Data Engineering

Companies routinely use data and analytics to drive productivity, gain broader organizational insight, and ultimately increase sales. However, the impact of data analysis extends well beyond the corporate world and helps address some of the most pressing human problems. From preventing blindness and treating opioid and alcohol abuse to fighting hunger, data science is being used not only as a business tool but also for the common good of society.

The overwhelming amount of data produced across society every day is commonly touted as a game-changer for science, technical advancement, and even policy-making. However, big data cannot change the world unless it is synthesized and analyzed into tools of public value.

Our Nearshore Procurement Service provides excellent talent and staff to develop these dynamic tools optimally and productively.

The foremost Big Data trends in 2020 are:

NoSQL covers a wide variety of separate database technologies built for modern applications. The term describes a non-SQL or non-relational database that provides a mechanism for storing and retrieving data. These databases are deployed in real-time web apps and big data analytics.

A NoSQL database stores unstructured data and offers faster performance and more flexibility when working with a wide variety of data types. Examples include MongoDB, Redis, and Cassandra.
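To make the "non-relational" idea concrete, here is a minimal sketch of a schema-less document store in plain Python. The `TinyDocumentStore` class and its methods are hypothetical names invented for illustration; this is not the API of MongoDB, Redis, or Cassandra, only a toy showing that documents with different fields can sit side by side without a declared table schema.

```python
from typing import Any, Dict, List, Optional


class TinyDocumentStore:
    """Toy in-memory document store (hypothetical; not a real NoSQL client)."""

    def __init__(self) -> None:
        self._docs: Dict[str, Dict[str, Any]] = {}

    def put(self, key: str, doc: Dict[str, Any]) -> None:
        # Documents are stored as given; no table schema is declared up front.
        self._docs[key] = dict(doc)

    def get(self, key: str) -> Optional[Dict[str, Any]]:
        doc = self._docs.get(key)
        return dict(doc) if doc is not None else None

    def find(self, **criteria: Any) -> List[Dict[str, Any]]:
        # Return every document whose fields match all given criteria.
        return [
            dict(doc)
            for doc in self._docs.values()
            if all(doc.get(field) == value for field, value in criteria.items())
        ]


store = TinyDocumentStore()
store.put("u1", {"name": "Ada", "city": "London"})
store.put("u2", {"name": "Lin", "city": "London", "tags": ["admin"]})  # extra field
store.put("e1", {"type": "click", "page": "/home"})  # a different shape entirely

londoners = store.find(city="London")
print([doc["name"] for doc in londoners])
```

Real NoSQL systems add persistence, indexing, replication, and a query language on top of this basic idea.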

R is a programming language and an open-source project. It is free software commonly used for statistical computing and graphics, and it can be used from integrated development environments such as Eclipse and Visual Studio, which helps collaboration.

A data lake is a centralized repository for storing all types of data, both structured and unstructured, at any scale.

When data is collected, it can be saved as it is, without first converting it into structured data, and then used for a wide variety of analytics, from dashboards and data visualization to big data processing, real-time analytics, and deep learning for better business decisions. (Refer to Blog: 5 Different Data Visualization Styles in Business Analytics)

Organizations that use data lakes may be able to outperform their rivals. New forms of analysis become possible, such as machine learning over new sources like log files, social media, click-stream data, and even data from IoT devices stored in the data lake.

A data lake helps companies identify and act on opportunities for stronger business growth by attracting and engaging customers, staying competitive, maintaining devices proactively, and making informed decisions.
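The "store it as it is, structure it later" idea can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: the `raw/<source>/` folder layout, the helper names `land_raw`, `read_clicks`, and `read_sensor_rows`, and the sample records are all invented for the example, and a local temporary directory stands in for real data lake storage.

```python
import csv
import json
import tempfile
from pathlib import Path
from typing import Dict, List


def land_raw(lake: Path, source: str, filename: str, payload: str) -> Path:
    """Store a payload unchanged under <lake>/raw/<source>/ (assumed layout)."""
    target = lake / "raw" / source / filename
    target.parent.mkdir(parents=True, exist_ok=True)
    target.write_text(payload, encoding="utf-8")
    return target


def read_clicks(lake: Path) -> List[Dict[str, str]]:
    """Apply structure at read time: parse JSON-lines click events."""
    events = []
    for path in sorted((lake / "raw" / "clickstream").glob("*.jsonl")):
        for line in path.read_text(encoding="utf-8").splitlines():
            if line.strip():
                events.append(json.loads(line))
    return events


def read_sensor_rows(lake: Path) -> List[Dict[str, object]]:
    """Apply structure at read time: parse CSV sensor readings."""
    rows = []
    for path in sorted((lake / "raw" / "iot").glob("*.csv")):
        with path.open(newline="", encoding="utf-8") as handle:
            for row in csv.DictReader(handle):
                rows.append({"device": row["device"], "temp_c": float(row["temp_c"])})
    return rows


# A temporary directory stands in for the lake; the data is made up.
lake_root = Path(tempfile.mkdtemp(prefix="lake_"))
land_raw(lake_root, "clickstream", "2020-01-01.jsonl",
         '{"user": "u1", "page": "/home"}\n{"user": "u2", "page": "/pricing"}\n')
land_raw(lake_root, "iot", "sensors.csv", "device,temp_c\nd1,21.5\nd2,19.0\n")

clicks = read_clicks(lake_root)
readings = read_sensor_rows(lake_root)
print(len(clicks), "click events;", len(readings), "sensor readings")
```

The point of the design is that ingestion never rejects or reshapes data; each consumer decides how to interpret the raw files when it reads them.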

Predictive analytics is a subfield of big data analytics that attempts to forecast future behavior from past data. It uses machine learning tools, data analysis, predictive modeling, and other mathematical methods to predict future events.

The science of predictive analytics produces inferences about the future with a reasonable degree of accuracy. With predictive analytics tools and models, any firm can use past and current data to identify trends and behaviors that could emerge at a given time. In this blog, you can review the definition of predictive modeling in machine learning.

For example, predictive models can explore the relationships between different trending parameters. These models are designed to assess the promise or risk presented by a given set of options.
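As a small illustration of forecasting from past data, the sketch below fits a straight line to a series with ordinary least squares and extrapolates one step ahead. Least squares is only one of many possible predictive techniques, chosen here for brevity; the function names and the monthly sales figures are made up for the example.

```python
from typing import List, Tuple


def fit_line(xs: List[float], ys: List[float]) -> Tuple[float, float]:
    """Ordinary least squares fit of y = slope * x + intercept."""
    n = len(xs)
    if n < 2 or n != len(ys):
        raise ValueError("need at least two (x, y) pairs of equal length")
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("x values must not all be equal")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def predict(slope: float, intercept: float, x: float) -> float:
    """Evaluate the fitted line at x."""
    return slope * x + intercept


months = [1, 2, 3, 4, 5]
sales = [10.0, 12.0, 14.0, 16.0, 18.0]  # illustrative figures, not real data

slope, intercept = fit_line(months, sales)
forecast = predict(slope, intercept, 6)
print(f"forecast for month 6: {forecast:.1f}")
```

Real predictive models usually involve many input variables, validation against held-out data, and an estimate of uncertainty alongside the point forecast.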

With built-in support for streaming, SQL, machine learning, and graph processing, Apache Spark is a fast and widely used engine for big data processing. It supports the major big data languages, including Python, R, Scala, and Java.

Spark was introduced to complement Hadoop, and its key aim in data processing is speed. It reduces the waiting time between submitting a query and the completion of the program. Spark is commonly used on top of Hadoop, with Hadoop primarily providing storage and Spark handling the processing. For some in-memory workloads, it can be up to a hundred times faster than MapReduce.
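For readers unfamiliar with the MapReduce model that Spark is being compared against, here is a minimal word-count sketch of its three phases in plain Python. It deliberately uses neither the Hadoop nor the Spark API; the function names and input lines are invented for illustration, and everything runs in a single process.

```python
from collections import defaultdict
from typing import Dict, Iterable, Iterator, List, Tuple


def map_phase(lines: Iterable[str]) -> Iterator[Tuple[str, int]]:
    """Map: emit a (word, 1) pair for every word in every line."""
    for line in lines:
        for word in line.lower().split():
            yield word, 1


def shuffle_phase(pairs: Iterable[Tuple[str, int]]) -> Dict[str, List[int]]:
    """Shuffle: group all emitted values by key."""
    grouped: Dict[str, List[int]] = defaultdict(list)
    for key, value in pairs:
        grouped[key].append(value)
    return grouped


def reduce_phase(grouped: Dict[str, List[int]]) -> Dict[str, int]:
    """Reduce: combine the values for each key into one result."""
    return {key: sum(values) for key, values in grouped.items()}


lines = [
    "spark runs on hadoop",
    "hadoop stores data",
    "spark processes data",
]
word_counts = reduce_phase(shuffle_phase(map_phase(lines)))
print(word_counts["spark"], word_counts["hadoop"], word_counts["data"])
```

In a cluster, the map and reduce steps run in parallel on many machines. Classic MapReduce writes intermediate results to disk between steps, whereas Spark tries to keep them in memory, which is the main source of the speed difference described above.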

Prescriptive analytics advises businesses on what they should do to achieve optimal results. For example, if a business is told that the margin on a product is expected to decline, prescriptive analytics can help it analyze different factors in response to market shifts and predict the most favorable outcomes.

It builds on descriptive and predictive analysis, but it emphasizes actionable insights over data monitoring and recommends the best approach for customer loyalty, revenue, and operating performance.

An in-memory database (IMDB) resides in the main memory (RAM) of the machine and is managed by an in-memory database management system. Traditionally, databases have been stored on hard drives.

Traditional disk-based databases are designed around block-oriented devices, where data is written and read in blocks. When one part of the database refers to another part, different blocks may have to be read from the disk. This is not a problem for an in-memory database, where interlinked relations in the database are followed using direct pointers.

In-memory databases are designed to achieve minimal response times by eliminating the need to access the disk. However, because all data is stored and managed entirely in main memory, there is a high risk that the data will be lost if a process or server fails.
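Both properties, no disk access and volatility, can be observed with SQLite's in-memory mode, which ships with Python's standard `sqlite3` module. This is a minimal sketch: SQLite is used here only because it is readily available, the `orders` table and its values are made up, and closing the connection stands in for a process failure.

```python
import sqlite3


def count_orders(conn: sqlite3.Connection) -> int:
    """Return the number of rows in the orders table."""
    return conn.execute("SELECT COUNT(*) FROM orders").fetchone()[0]


# ":memory:" asks SQLite to keep the whole database in RAM, with no file on disk.
conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, amount REAL)")
conn.executemany(
    "INSERT INTO orders (amount) VALUES (?)",
    [(19.99,), (5.00,), (42.50,)],  # illustrative values
)
conn.commit()
rows_before_close = count_orders(conn)

# Closing the connection discards the database, much like a crashed process would.
conn.close()

# A new connection to ":memory:" is a brand-new, empty database.
fresh = sqlite3.connect(":memory:")
tables_after_restart = fresh.execute(
    "SELECT name FROM sqlite_master WHERE type = 'table'"
).fetchall()
fresh.close()

print(rows_before_close, tables_after_restart)
```

Production in-memory databases typically reduce this risk with techniques such as snapshots, transaction logs, or replication to other nodes.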

Blockchain is the distributed ledger technology that underpins the Bitcoin digital currency. Its distinctive feature is secured data: once written, data can never be deleted or changed later.

It is a highly secure environment and a good option for various big data applications in accounting, investment, insurance, healthcare, retail, and other sectors.

Blockchain technology is still maturing. However, several vendors, including AWS, IBM, and Microsoft, as well as startups, have run experiments to build new solutions on blockchain technology.
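The "once written, never changed" property comes from linking each block to the hash of the previous one, so that any edit is detectable. The sketch below shows only that hash-linking idea using Python's standard `hashlib`; the function names, block fields, and transactions are invented for illustration, and a real blockchain adds consensus, digital signatures, and distribution across many nodes.

```python
import hashlib
import json
from typing import Any, Dict, List

GENESIS_PREV_HASH = "0" * 64  # placeholder "previous hash" for the first block


def block_hash(index: int, data: str, prev_hash: str) -> str:
    """SHA-256 over the block's contents, including the previous block's hash."""
    payload = json.dumps(
        {"index": index, "data": data, "prev_hash": prev_hash}, sort_keys=True
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def append_block(chain: List[Dict[str, Any]], data: str) -> None:
    """Add a block that points at the hash of the current last block."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS_PREV_HASH
    index = len(chain)
    chain.append({
        "index": index,
        "data": data,
        "prev_hash": prev_hash,
        "hash": block_hash(index, data, prev_hash),
    })


def is_valid(chain: List[Dict[str, Any]]) -> bool:
    """Recompute every hash and check that each block links to its predecessor."""
    expected_prev = GENESIS_PREV_HASH
    for block in chain:
        if block["prev_hash"] != expected_prev:
            return False
        if block["hash"] != block_hash(block["index"], block["data"], block["prev_hash"]):
            return False
        expected_prev = block["hash"]
    return True


ledger: List[Dict[str, Any]] = []
append_block(ledger, "alice pays bob 5")
append_block(ledger, "bob pays carol 2")
valid_before = is_valid(ledger)

ledger[0]["data"] = "alice pays bob 500"  # tamper with an old record
valid_after = is_valid(ledger)
print(valid_before, valid_after)
```

Strictly speaking, data in such a chain can still be altered, but the alteration breaks the stored hashes and is immediately visible to anyone who re-verifies the chain.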

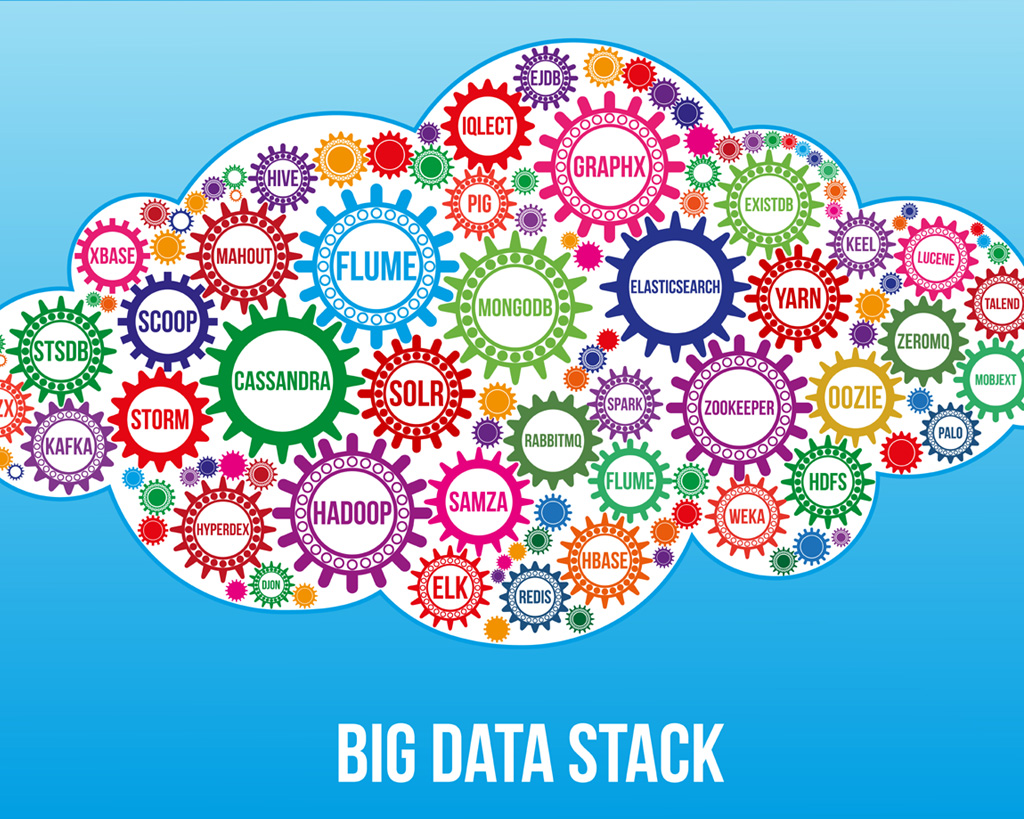

The Hadoop ecosystem is a platform that aims to address the problems of big data. It comprises various components and services for ingesting, storing, analyzing, and maintaining data.

Most of the services in the Hadoop ecosystem complement its core components: HDFS, YARN, MapReduce, and Hadoop Common.

The Hadoop ecosystem includes Apache open-source projects as well as a wide range of commercial tools and solutions. Some of the well-known open-source examples are Spark, Hive, Pig, Sqoop, and Oozie.